Monitoring, analysis and evaluation – three very separate components of a consultation

Monitoring, analysis and evaluation are all very important components of consultation and should not be confused. The important difference is that monitoring occurs throughout the consultation. Analysis, although it can be on-going, takes place (or is complete) at the end of the process. Likewise although tactics can be evaluated while they are in progress, the consultation can only be fully evaluated when complete.

Monitoring

Unlike analysis and evaluation, monitoring need not be a formal, systematic process. Neither does monitoring need to be recorded. It is simply the process by which consultation tactics are observed. The benefits are two-fold: to ensure that tactics are working effectively, and to enable the development team to take part in the dialogue as necessary. While the former is a necessary feature of all good consultations, the extent to which the latter is carried out varies hugely.

Analysis

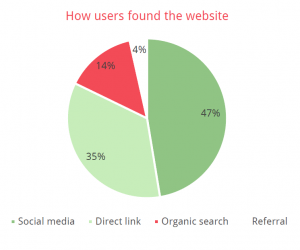

Analysis is the collection of data generated by the consultation – percentages from polls, comments from emails, reports from workshops – and the process of making sense of it. This is both simpler and more effective if the analysis of each tactic is planned in advance. A so-called consultation tactic which does not produce data in a form that can be analysed is counter-productive to the consultation: not only is it a waste of resources but if information is requested which does not then form a meaningful part of the analysis, trust may be destroyed.

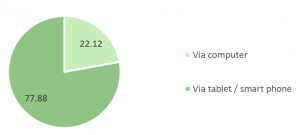

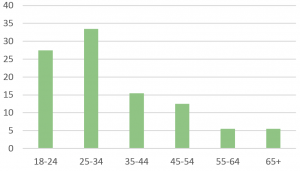

Data usually falls into one of two categories: qualitative or quantitative. Quantitative data can be measured by number. Consequently, analysis tends to be relatively simple. Typically quantitative data may comprise percentages (‘67% of those attending the exhibition supported the introduction of a new footbridge’), quantities (‘546 individuals supported the proposals’) or comparisons (‘Five out of seven members of the committee voted in favour of Design Option 2’). The way to create a more meaningful picture is to cross-tabulate, that is, to break one result down by another characteristic of the respondents (‘Of the 546 individuals who supported the new footbridge, 87% were daily commuters’). Likewise, quantitative data can be useful for comparison purposes (‘87% of local residents supported the new footbridge following the announcement of Design Option 2; prior to this only 61% of residents were favourable’) or for showing changes in attitudes over time (‘Support for a new footbridge has increased in excess of 10% year on year for the past five years’).
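For readers who handle consultation responses in a spreadsheet or script, cross-tabulation can be sketched in a few lines of Python. This is only an illustration: the respondent groups, the responses and the function names `cross_tabulate` and `share_within_answer` are all invented for the example and are not drawn from any real consultation.

```python
from collections import Counter

# Hypothetical responses: (respondent group, position on the new footbridge).
responses = [
    ("daily commuter", "support"),
    ("daily commuter", "support"),
    ("daily commuter", "oppose"),
    ("occasional user", "support"),
    ("occasional user", "oppose"),
    ("occasional user", "oppose"),
    ("non-user", "oppose"),
    ("daily commuter", "support"),
]


def cross_tabulate(rows):
    """Count how many responses fall into each (group, answer) pair."""
    return Counter(rows)


def share_within_answer(rows, answer):
    """Percentage of each group among respondents who gave `answer`."""
    groups = Counter(group for group, ans in rows if ans == answer)
    total = sum(groups.values())
    if total == 0:
        return {}  # nobody gave this answer, so there is nothing to break down
    return {group: round(100 * n / total, 1) for group, n in groups.items()}


table = cross_tabulate(responses)
supporters = share_within_answer(responses, "support")
print(supporters)
```

With this invented data, three of the four supporters are daily commuters, so the breakdown reports 75% for that group. The same approach scales to real response sets, provided each tactic records the respondent characteristic you intend to break results down by.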

Evaluation

Evaluation is the process by which a consultation is reviewed. Its dual purpose is to demonstrate that an effective consultation has been carried out, and to benefit future consultations. The former gives credibility to the results and can also make sense of any inconsistencies. For example, initial analysis might reveal that 85% of local residents support the inclusion of an educational facility at a wind farm development, but at a small meeting with local residents, only 10% indicated support for the facility. Evaluation of the process would demonstrate that this particular meeting was instigated by the local ramblers’ group, which adamantly opposed any development on the fields in question. Although accurate, these results therefore represented the view of a minority group and, importantly, reflected opposition to the wind farm in principle rather than to the educational facility.

Ideally, evaluation is formative rather than summative: making sense of the consultation throughout and making changes as necessary, rather than simply assessing it at the end of the process. There is also an argument for evaluation to be carried out externally to allow for objectivity, although the counter-argument is that the process of evaluation is a useful learning experience for the team at the heart of the project.

When planning a consultation, ensure that all three elements are present and that each is clearly assigned to specific steps in the process, so that they cannot be confused.

Penny Norton

Penny’s book Public Consultation and Community Involvement in Planning: a twenty-first century guide is published by Routledge. It is available online through Routledge, Amazon and other bookshops.